I spend a lot of time thinking about what new technology can actually do inside an organization, and I don’t just mean in terms of theory, or demos, or headlines. I’m talking about the reality of how people work. That’s why I’ve become so careful when a new term or technology suddenly starts showing up everywhere.

Right now, that term is AI agents.

It feels like agents are in every keynote, every roadmap conversation, every product launch, and every executive discussion about what comes next. Depending on who’s talking, they’re either the next major leap in enterprise AI or proof that your organization is already behind. Whenever that happens, I find it useful to slow down.

It’s not because the technology isn’t real or because it won’t matter. It is, and it will. But hype has a way of flattening nuance. It makes everything sound more mature, more autonomous, and more inevitable than it really is. And that’s when organizations start making bad decisions, either by dismissing something important or by rushing into it without enough clarity.

Jennifer Cannizzaro, Responsive’s VP of Product Marketing, shared a story that captured this perfectly during the latest episode of The Responsive Podcast. She was at an event where 100 people were asked who was actively building and using AI agents. Two hands went up. When asked who was expected to build and adopt agents, nearly every hand went up. That gap says a lot.

There’s pressure to move. There’s curiosity. There’s urgency. But there’s also a lot of uncertainty about what agents really are, where they fit, and how to use them without creating more risk than value. That’s the part I want to focus on.

I don’t think the answer is to lean harder into the hype. Instead, I think the answer is to get practical. Start with what AI agents actually are. Then get clear on where organizations should begin. Then be honest about what tends to go wrong. So, let’s start there.

What AI agents actually are, and why the definition remains blurry

One of the first problems with AI agents is that the term has become too loose. Right now, there are so many different things being described the same way. A chatbot is called an agent. A workflow automation tool is called an agent. A prompt template is called an agent. At that point, the term stops being useful.

There’s a lot of confusion, and there’s a lot of agent washing happening right now. So when I think about what qualifies as an agent, I come back to a fairly simple standard.

For a system to be an agent, it has to meet a few conditions:

- It needs to understand its task.

- It needs some decision-making capability.

- It needs to decide what path to take based on the situation.

- It needs access to tools that let it interact with the external world, not just generate output.

A useful AI tool can help you produce content, summarize information, or answer a question. An agent goes further. An agent works toward an outcome. It makes choices, takes action, and adjusts based on the available context.

That’s why I like using the Roomba vacuum as an example of the first AI agent. A Roomba knows its task is to clean the floor, and it can even plan its path around obstacles. In most cases, it knows when it’s near the stairs and turns around. That’s not just a machine following one static instruction. The Roomba is navigating toward a goal and making decisions along the way.

The same principle applies in the enterprise. Take customer support as an example. A customer support system starts to look like an agent if it can:

- Understand what a customer is asking

- Decide whether the request is routine or sensitive

- Retrieve the right information

- Determine whether it can resolve the issue directly

- Know when to escalate a problem

That’s a lot of conditions, but meeting all of them represents a meaningful leap from a basic AI assistant to an AI agent. Once teams understand that distinction, something important begins to happen: they stop trying to force agents into every problem. They start realizing that in some cases, an agent is the right answer. In many others, a simpler AI-assisted workflow is enough.

That clarity matters more than the label.
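To make the customer support example concrete, here is a minimal Python sketch of that decision flow. Everything in it is an assumption for illustration: `SENSITIVE_TERMS`, `KNOWLEDGE_BASE`, and `handle_request` are hypothetical names, and simple keyword matching stands in for the language model a real system would use to understand the request.

```python
from dataclasses import dataclass

# Hypothetical data for illustration only. A real system would use a model
# for understanding and a trusted knowledge base for retrieval.
SENSITIVE_TERMS = ("refund", "legal", "cancel", "breach")
KNOWLEDGE_BASE = {
    "reset password": "Use the 'Forgot password' link on the sign-in page.",
    "update billing": "Go to Settings > Billing to change your card.",
}


@dataclass
class Decision:
    action: str  # "resolve" or "escalate"
    reply: str   # answer for the customer, or a note for the human reviewer


def handle_request(message: str) -> Decision:
    text = message.lower()

    # Decide whether the request is routine or sensitive.
    if any(term in text for term in SENSITIVE_TERMS):
        return Decision("escalate", "Sensitive request: route to a human.")

    # Retrieve the right information, if a trusted answer exists.
    for topic, answer in KNOWLEDGE_BASE.items():
        if all(word in text for word in topic.split()):
            return Decision("resolve", answer)

    # Know when to escalate: no trusted answer means no guessing.
    return Decision("escalate", "No trusted answer found: route to a human.")
```

Calling `handle_request("How do I reset my password?")` resolves directly, while a message that mentions a refund is escalated. The point of the sketch is the shape of the flow, with escalation as the default whenever the system is unsure.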

Where organizations should start (and why to start with the boring stuff)

When organizations first start talking about AI agents, there’s a strong temptation to aim for the most ambitious use case in the room. That’s understandable, because the promise of agents is exciting and far-reaching. If you believe the market narrative that dominated most of 2025, you’re one deployment away from fully autonomous systems transforming how work gets done.

I don’t think that’s the right mindset for most teams, especially given how agents actually played out over the past year. If I were advising an organization on where to begin, I would suggest starting with something simple.

The best starting points tend to be tasks that are repeatable, high volume, clearly defined, auditable, and reversible. They are the kinds of workflows where you can insert AI without creating a large blast radius if something goes wrong. And ideally, they’re workflows that make human oversight easy to maintain. That’s just good operating discipline.
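One way to apply those five criteria is as a simple screening checklist. The sketch below is a hypothetical illustration, not a formal scoring method: the `Candidate` class and both function names are assumptions, and the yes/no answers would come from the people who own the workflow.

```python
from dataclasses import dataclass, fields


@dataclass
class Candidate:
    """A workflow being considered as a first AI use case (hypothetical)."""
    name: str
    repeatable: bool
    high_volume: bool
    clearly_defined: bool
    auditable: bool
    reversible: bool


def missing_criteria(task: Candidate) -> list[str]:
    """Return the names of the starting-point criteria the task fails."""
    return [
        f.name
        for f in fields(task)
        if f.name != "name" and not getattr(task, f.name)
    ]


def is_good_starting_point(task: Candidate) -> bool:
    """A task qualifies only if it meets all five criteria."""
    return not missing_criteria(task)


faq = Candidate("Answer top support FAQs", True, True, True, True, True)
wire = Candidate("Approve wire transfers", True, False, True, True, False)
```

Here `faq` passes the screen, while `missing_criteria(wire)` reports which criteria the second workflow fails. Listing the gaps is more useful than a single yes or no, because it shows what would have to change before that workflow becomes a reasonable candidate.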

One of the most overlooked truths here is that many organizations may not need an agent as the first step. In many environments, it makes more sense to start with an AI-assisted workflow. Get teams comfortable retrieving information faster, triaging requests more effectively, and generating first drafts for review. That’s real progress that can transition easily to agents.

Take a support team as an example. You don’t need to try to automate everything at once. Start with the top 10 questions that come in over and over again, the kind of questions that you already have clear answers to. Automate those and wait for the early results.
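Finding those questions can be as simple as counting. The sketch below assumes tickets have already been reduced to a short question string, which is the hard part in practice; `top_questions` and the sample data are hypothetical and exist only to illustrate the idea.

```python
from collections import Counter


def top_questions(tickets, answers, n=10):
    """Return up to n of the most frequent questions that already have
    a clear, approved answer, as (question, count) pairs."""
    # Normalize lightly so trivial differences do not split the counts.
    counts = Counter(question.strip().lower() for question in tickets)
    # Keep only questions with a known answer, most frequent first.
    ranked = [(q, c) for q, c in counts.most_common() if q in answers]
    return ranked[:n]


# Hypothetical sample data.
tickets = [
    "Reset password", "reset password ", "Export data",
    "export data", "export data", "Why is the sky blue",
]
answers = {
    "reset password": "Use the 'Forgot password' link.",
    "export data": "Go to Settings > Export.",
}
shortlist = top_questions(tickets, answers)
```

Questions without an approved answer never make the shortlist, no matter how often they come in. Those are a signal to fix the knowledge base first, not a reason to let the system improvise.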

This advice works for support. It works for internal knowledge retrieval. It works for early-stage document triage. It works for certain response workflows where a high-quality first draft saves time, but a human should always make the final call.

This is one of the places where I think leaders sometimes get the sequence wrong. They focus only on whether the technology is ready. But in the early stages, the more important question is often whether the organization is ready.

- Are your workflows clear enough?

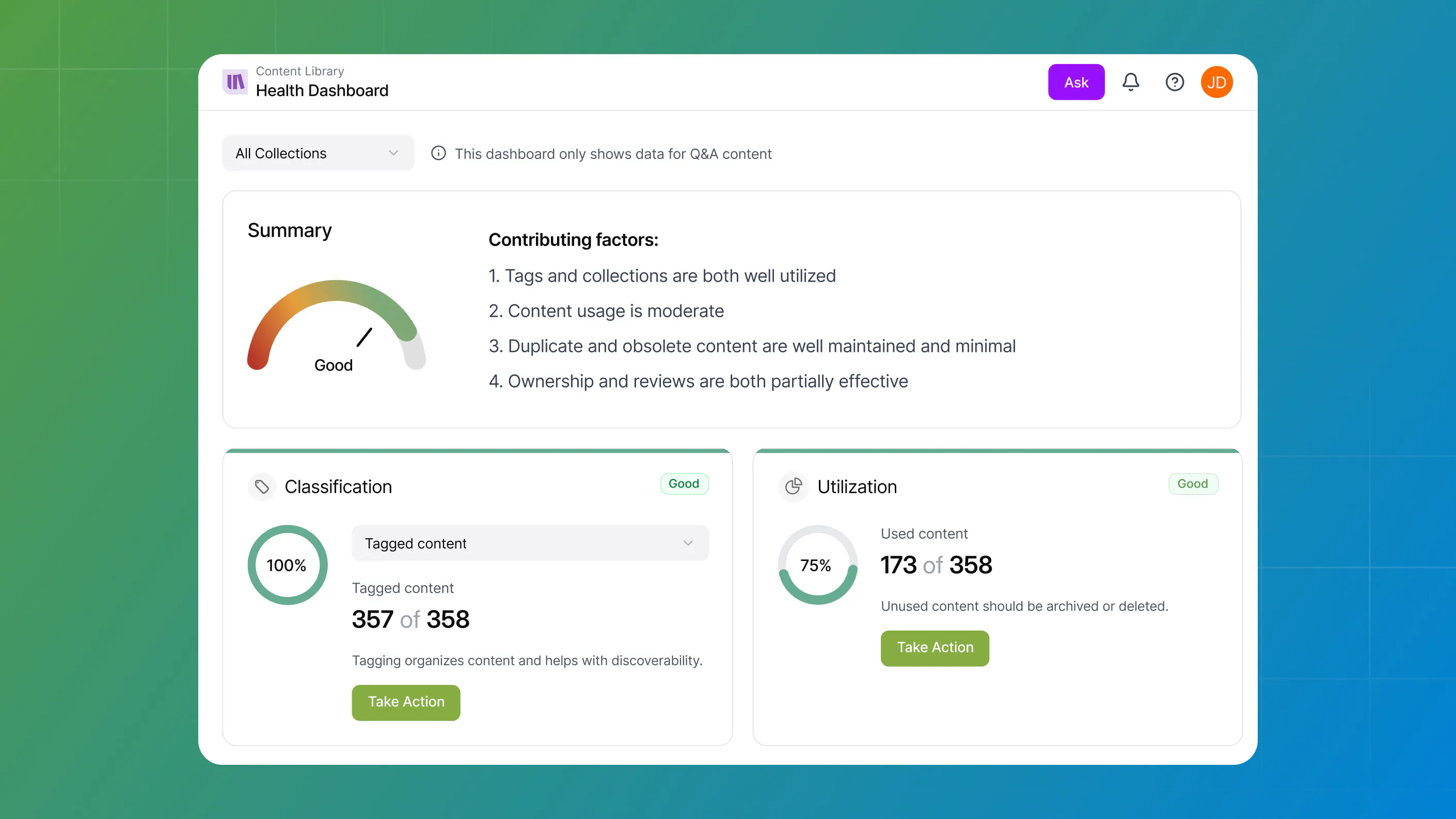

- Is your knowledge base trusted enough?

- Do your teams understand where human review belongs?

- Do they know what to do when the system is helpful, and what to do when it isn’t?

These are the important questions to ask on the path to adoption.

This is why early experimentation matters. It’s not because every pilot becomes a scaled deployment, but because the organization learns where the risks are, where the process needs to tighten, what people are comfortable with, and how to separate novelty from utility.

That’s what I’d look for in a first step with AI agents. Look for the real signals, not the “big reveal.”

What organizations should avoid (where hype turns into disappointment)

Once teams move from curiosity into implementation, the failure modes become pretty predictable. In my experience, three mistakes keep showing up.

Don’t confuse more autonomy with more value

There’s a lot of energy in the market around fully autonomous agents, systems you can turn loose on broad business problems and trust to figure it out. That’s the main appeal of agents for so many people, but it’s also where a lot of the fear comes from.

When I think about what I’d trust an agent to do autonomously, I use a simple analogy: would you bring in a new employee, hand them a task, and then walk away with no oversight? If it’s a low-risk task, the answer might actually be yes.

But there’s no chance the answer would be yes if the decision were critical, irreversible, or compliance-sensitive. I think that’s the right gut check. The goal for AI agents is not maximum autonomy. Rather, the goal should be appropriate autonomy.

In some workflows, an agent can act with a fair amount of independence. In others, the right design is tighter guardrails, clearer checkpoints, and a human in the loop for oversight and review. There’s no prize for removing people faster than the use case can support.
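The gut check above can be written down as an explicit rule, so the decision is made once and applied consistently. This is a minimal sketch under my own assumptions: the `Task` class, the two mode names, and the rule that any single flag is enough to require a human are all illustrative, and a real policy would likely have more tiers.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """A unit of work an agent might take on (hypothetical model)."""
    name: str
    critical: bool = False
    irreversible: bool = False
    compliance_sensitive: bool = False


def oversight_reasons(task: Task) -> list[str]:
    """List the reasons, if any, that this task needs a human checkpoint."""
    flags = {
        "critical": task.critical,
        "irreversible": task.irreversible,
        "compliance-sensitive": task.compliance_sensitive,
    }
    return [label for label, is_set in flags.items() if is_set]


def autonomy_for(task: Task) -> str:
    """Any single risk flag is enough to keep a human in the loop."""
    return "human_in_the_loop" if oversight_reasons(task) else "autonomous"
```

With this rule, `autonomy_for(Task("Tag incoming tickets"))` comes back as autonomous, while `Task("Issue refunds", irreversible=True)` requires a human checkpoint. Returning the reasons alongside the mode also makes the decision auditable later.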

Don’t point agents at messy knowledge and expect good outcomes

This is probably the most underappreciated problem in enterprise AI. People often assume that if the model is strong enough, it will sort out whatever context you give it. That’s not how this works. If your system is pulling from conflicting instructions, outdated content, or unclear process documentation, the output will reflect that confusion.

If you point an agent to enterprise data and you have four different versions of the standard operating procedure, a human will get confused, and so will an agent.

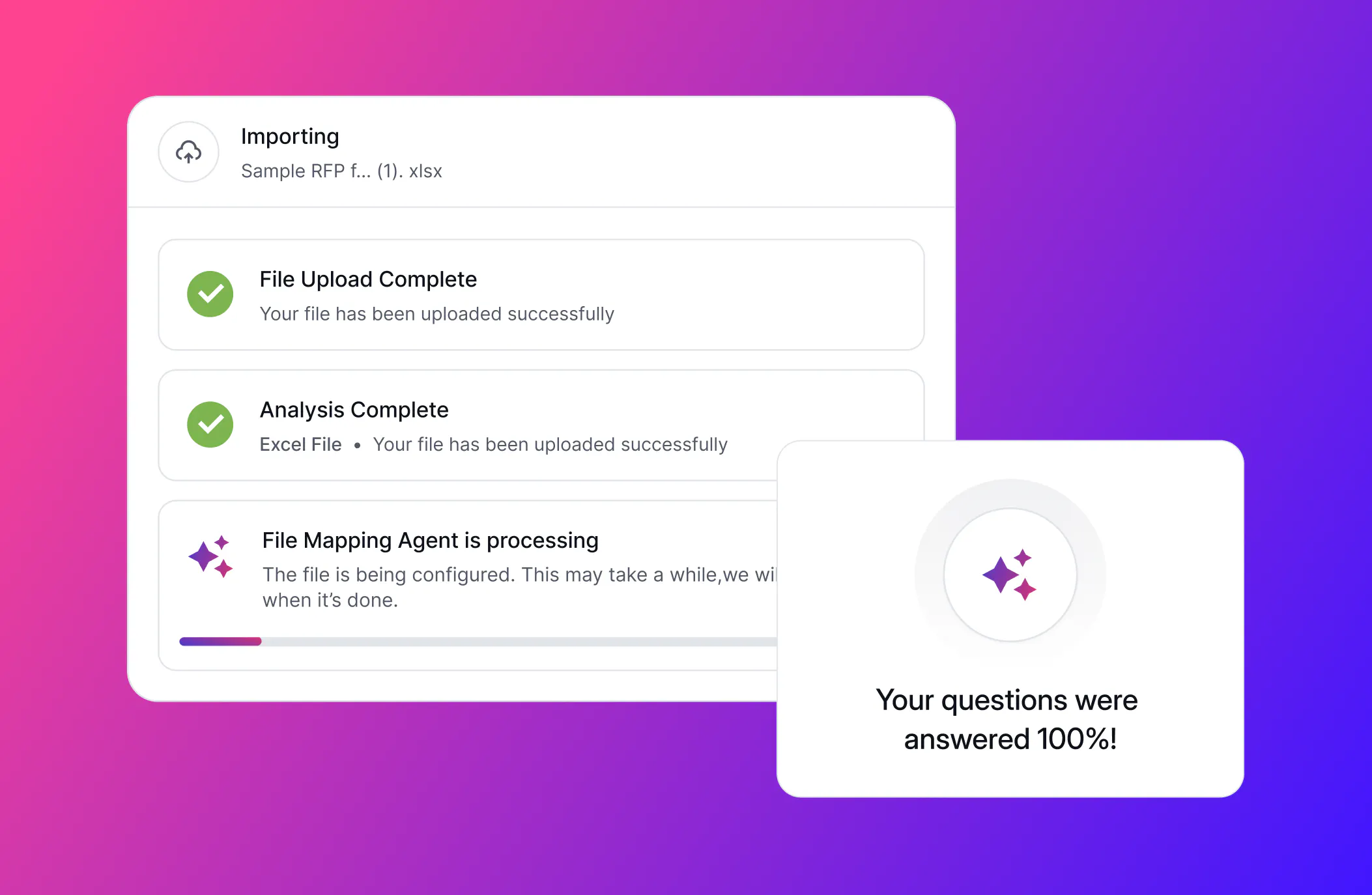

That’s why I keep coming back to effective knowledge management. Agents need clean instructions. They need trusted content. They need clear boundaries around what sources matter, what content is current, and what guidance takes precedence. Without those things, you’re not building intelligence into the workflow. You’re scaling ambiguity.
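Those boundaries can be made explicit before an agent ever sees the content. The sketch below is one hypothetical way to do it: the `Doc` fields, the source names in `TRUSTED_SOURCES`, and the rule that source precedence beats recency are all assumptions for illustration, not a description of any particular product.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    title: str
    source: str
    updated: date
    archived: bool = False


# Hypothetical trusted sources, listed in order of precedence.
TRUSTED_SOURCES = ("policy-portal", "team-wiki")


def authoritative(docs):
    """Pick the single version an agent should use, or None if no
    trusted, current version exists."""
    candidates = [
        d for d in docs
        if d.source in TRUSTED_SOURCES and not d.archived
    ]
    if not candidates:
        return None
    # Higher-precedence source wins; the most recent update breaks ties.
    return min(
        candidates,
        key=lambda d: (TRUSTED_SOURCES.index(d.source), -d.updated.toordinal()),
    )


# Four versions of the same standard operating procedure.
sops = [
    Doc("Refund SOP v1", "policy-portal", date(2023, 1, 10), archived=True),
    Doc("Refund SOP v2", "policy-portal", date(2024, 6, 1)),
    Doc("Refund SOP (team copy)", "team-wiki", date(2025, 2, 15)),
    Doc("Refund SOP draft", "shared-drive", date(2025, 3, 1)),
]
```

Given the four versions above, `authoritative(sops)` returns the current policy-portal version, even though two other copies were edited more recently. Returning `None` when nothing qualifies matters just as much, because it forces a decision about the content instead of letting the agent guess.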

Don’t underestimate change management

This is the part that technical teams often want to rush past. Even when the technology works well, the organizational change is still real. People need to understand how these systems fit into their work, when to rely on them, when to challenge them, and how accountability works when AI is involved.

Change management here is a combination of training and trust. You need to bring teams along, stage the rollout, establish feedback loops, and clarify where human judgment still matters most. You also need to be honest that even good systems can feel unfamiliar at first.

If you skip that work, people won’t experience the technology as helpful. They’ll experience it as one more thing being dropped into their workflow, with insufficient context or support. That’s when the narrative becomes “AI doesn’t work,” when the real problem is that the implementation was sloppy.

How to move from hype to practical adoption

Despite the caution I talked about in the podcast, I’m optimistic about AI agents. I think they represent a meaningful shift in how work can get done. They will become an important part of how organizations operate. Teams that start learning now will be in a much better position than teams that wait until everything feels settled.

Don’t wait for perfection, but pair that with caution about jumping into the deep end too early. That balance is what matters. The organizations that get real value from AI agents won’t be the ones using them the most aggressively early on. They’ll be the ones who understand what an agent is, choose the right place to begin, and build on a foundation of trusted knowledge, strong process discipline, and human judgment.

That path to success is less flashy than following the hype, but it’s also more likely to work.

AJ Sunder

Co-Founder and CTO @ Responsive

A highly experienced Information Security Analyst, Sunder oversees development of advanced SaaS technologies and AI to improve the response process and increase business close rates.