As organizations increasingly rely on artificial intelligence to handle critical business communications, inaccuracies pose real risks. Our Strategic Response Management (SRM) platform uses a multi-layered approach to minimize hallucinations while leveraging generative AI to help organizations win more business and respond faster to mission-critical requests.

How Responsive prevents AI hallucinations

1. Knowledge base grounding

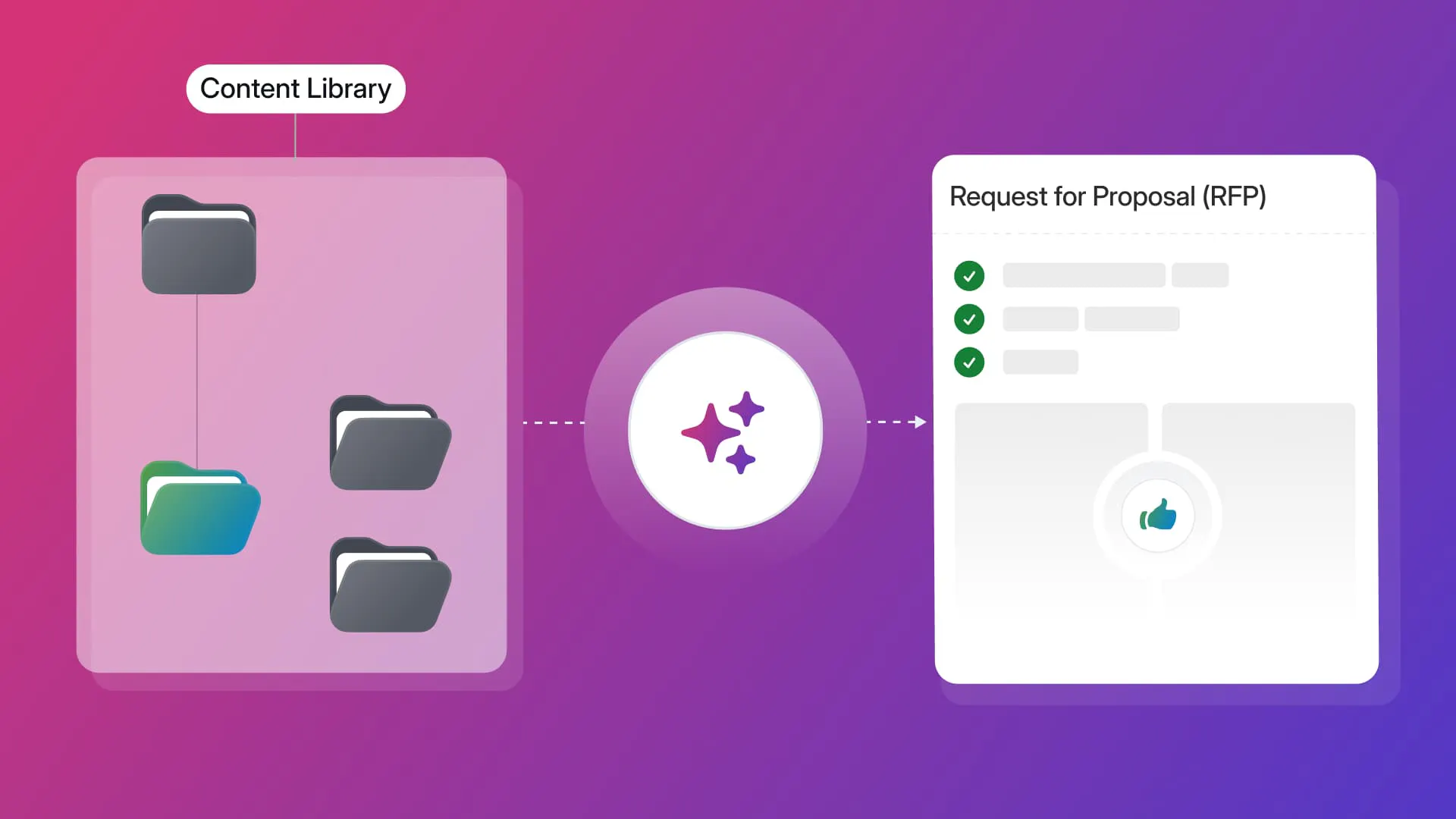

Our hallucination-prevention strategy begins with the Content Library system, which uses Retrieval-Augmented Generation (RAG). Rather than relying solely on the general knowledge embedded in large language models, our AI Assistant sources information directly from customers' curated content libraries.

When generating responses, the AI Assistant pulls from pre-authored, verified content that organizations have carefully developed and maintained. The AI operates on a trusted knowledge base that includes the organization's product details, security practices, compliance information, and other critical business content.

The enhanced AI Assistant sources information from customers' content libraries and, when appropriate, publicly available sources, but always prioritizes the organization's verified internal knowledge. This grounding in trusted sources reduces the risk that the AI will generate inaccurate or misleading information.
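To make the grounding idea concrete, here is a minimal sketch of retrieval that ranks curated internal passages ahead of public ones before building a prompt. It is an illustration only, not Responsive's implementation: the `Passage` type, the word-overlap scoring, and the sample passages are all assumptions made for this example, and a production RAG system would typically use embedding-based search instead.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    text: str
    source: str     # hypothetical label, e.g. a document title
    internal: bool  # True when the passage comes from the curated library


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages that share words with the question, internal first."""
    scored = [(len(words(question) & words(p.text)), p) for p in passages]
    relevant = [(score, p) for score, p in scored if score > 0]
    # Internal passages sort ahead of public ones; ties break on higher overlap.
    relevant.sort(key=lambda item: (not item[1].internal, -item[0]))
    return [p for _, p in relevant[:k]]


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, 1)
    )
    return f"Answer using only the context below.\n{context}\nQuestion: {question}"


# Made-up sample data for illustration.
passages = [
    Passage("Many vendors encrypt data at rest.", "Public web page", False),
    Passage("Data is encrypted at rest with AES-256.", "Security Overview", True),
    Passage("Support hours are listed in the help center.", "Support FAQ", True),
]
question = "Is data encrypted at rest?"
top = retrieve(question, passages)
prompt = build_prompt(question, top)
```

In this sketch the internal passage is placed first in the prompt even though a public passage also matches, which mirrors the "internal knowledge first" priority described above.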

2. Source citations

We've implemented automatic source attribution. Our AI agents craft answers "directly from your knowledge base, with source citations and no hallucinations."

Providing clear citations to the original source material serves two goals:

- Transparency: Users can see exactly where the AI pulled its information from, making verification easy

- Accountability: The requirement to cite sources acts as a constraint on the AI, discouraging unsupported claims and making any that slip through easier to spot

When users search the Content Library and use AI Assistant-generated responses, they can see all the references below the generated draft for quick verification of the source material.
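One way to picture source attribution is as a mapping from each drafted sentence to the library entry that best supports it, with unsupported sentences flagged for review. The sketch below assumes a simple word-overlap match and a hypothetical `min_overlap` threshold. The function names and sample content are invented for illustration and do not describe how the AI Assistant actually produces its references.

```python
import re
from typing import Optional


def tokens(text: str) -> set[str]:
    """Lowercase the text and return its alphanumeric words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def cite(
    sentences: list[str], sources: dict[str, str], min_overlap: int = 3
) -> list[tuple[str, Optional[str]]]:
    """Pair each sentence with the best-matching source title, or None."""
    results = []
    for sentence in sentences:
        best_title, best_score = None, 0
        for title, text in sources.items():
            score = len(tokens(sentence) & tokens(text))
            if score > best_score:
                best_title, best_score = title, score
        # Below the threshold, treat the sentence as unsupported.
        results.append((sentence, best_title if best_score >= min_overlap else None))
    return results


# Made-up library entries and draft sentences for illustration.
sources = {
    "Security Overview": "Customer data is encrypted at rest and in transit.",
    "Support Policy": "Support is available on business days.",
}
draft = ["Customer data is encrypted at rest.", "We guarantee zero downtime."]
cited = cite(draft, sources)
unsupported = [sentence for sentence, title in cited if title is None]
```

Here the second sentence has no matching source, so it lands in `unsupported`, which is the kind of claim a reviewer would want to check or remove.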

Each response also receives a TRACE Score™, an industry-first scoring system that provides an objective, easy-to-understand 0-100 rating across five parameters, from trustworthiness to explainability, along with detailed insights for each response.

Additionally, Responsive Verbatim allows organizations to lock approved phrases, disclosures, and statements that must remain word-for-word, ensuring nothing critical is rewritten or paraphrased by AI.
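The word-for-word requirement can be illustrated with a simple check that every locked phrase still appears unchanged in a draft. This is a sketch under stated assumptions, not a description of how Responsive Verbatim works internally. The function name and the sample disclosure are hypothetical.

```python
def missing_locked_phrases(draft: str, locked: list[str]) -> list[str]:
    """Return the locked phrases that do not appear verbatim in the draft."""
    return [phrase for phrase in locked if phrase not in draft]


# Made-up disclosure text for illustration.
locked = ["Past performance does not guarantee future results."]

faithful = "Returns vary. Past performance does not guarantee future results."
paraphrased = "Returns vary. Earlier performance is no guarantee of later results."

ok = missing_locked_phrases(faithful, locked)
problems = missing_locked_phrases(paraphrased, locked)
```

The exact substring match is deliberate in this sketch: a paraphrase that keeps the meaning but changes the wording still counts as a violation.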

3. Human-in-the-loop design

Even with advanced AI technology, human oversight remains necessary. Our platform is designed to be "assistive," enhancing user capabilities rather than replacing human judgment.

As stated in our Responsible AI FAQs: "Users must carefully review the content, and make appropriate edits" because "GPT can produce inaccurate or misleading outputs." The platform implements a human-in-the-loop (HITL) philosophy, where users:

- Review AI-generated content before use

- Verify outputs against available information

- Make necessary edits and refinements

- Apply their domain expertise and judgment

While AI accelerates the response process, human expertise remains the final checkpoint for accuracy and appropriateness.
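The review steps above can be sketched as a small approval gate: a draft cannot be used until a named reviewer has signed off, optionally after editing it. The `Draft` class and `publish` function are hypothetical names chosen for this illustration and are not part of the Responsive platform's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    reviewed_by: Optional[str] = None  # stays None until a human approves

    def approve(self, reviewer: str, edited_text: Optional[str] = None) -> None:
        """Record the reviewer and apply their edits, if any."""
        if edited_text is not None:
            self.text = edited_text
        self.reviewed_by = reviewer


def publish(draft: Draft) -> str:
    """Release the draft text only after a human has reviewed it."""
    if draft.reviewed_by is None:
        raise PermissionError("draft needs human review before use")
    return draft.text


# Made-up draft and reviewer for illustration.
draft = Draft("We support SSO for all plans.")
draft.approve("reviewer@example.com", "We support SSO on Enterprise plans.")
final_text = publish(draft)
```

Calling `publish` on a draft that nobody has approved raises `PermissionError`, which keeps the human check from being skipped by accident.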

4. Content management and quality control

Responsive automates content management to keep the knowledge base current and reliable. The platform includes technologies that automate the prevention and removal of redundant, obsolete, and trivial (ROT) content, improving productivity and making the Content Library a reliable source for AI-generated content.

Keeping the content library clean, current, and accurate provides the AI with high-quality source material. Outdated or incorrect information in the knowledge base could lead to AI hallucinations, so this proactive content management serves as an upstream prevention strategy.
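As a rough illustration of upstream content hygiene, the sketch below flags two of the three ROT categories: redundant entries (exact duplicates after normalizing case and whitespace) and obsolete entries (not reviewed within a cutoff). The entry fields, the 365-day cutoff, and the sample data are assumptions for this example. It does not attempt to detect trivial content and does not represent the platform's actual ROT tooling.

```python
from datetime import date, timedelta


def flag_rot(entries: list[dict], today: date, max_age_days: int = 365) -> dict:
    """Return {entry id: [reasons]} for duplicate or stale entries."""
    first_seen: dict[str, str] = {}
    flags: dict[str, list[str]] = {}
    for entry in entries:
        reasons = []
        # Normalize case and whitespace so near-identical copies collide.
        key = " ".join(entry["text"].lower().split())
        if key in first_seen:
            reasons.append(f"duplicate of {first_seen[key]}")
        else:
            first_seen[key] = entry["id"]
        if today - entry["last_reviewed"] > timedelta(days=max_age_days):
            reasons.append("stale")
        if reasons:
            flags[entry["id"]] = reasons
    return flags


# Made-up library entries for illustration.
entries = [
    {"id": "A1", "text": "We support SSO via SAML.", "last_reviewed": date(2024, 5, 1)},
    {"id": "A2", "text": "we support  SSO via SAML.", "last_reviewed": date(2024, 6, 1)},
    {"id": "A3", "text": "Our API is versioned.", "last_reviewed": date(2021, 1, 1)},
]
flags = flag_rot(entries, today=date(2024, 9, 1))
```

Flagged entries would then go to a content owner for merging, updating, or archiving rather than being deleted automatically.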

The Responsive platform also provides reporting and usage tracking, enabling organizations to monitor content usage, identify knowledge base gaps, and improve the quality of their source material.

5. Privacy and data security measures

Our data privacy practices contribute to the system's overall reliability. We've opted out of allowing OpenAI to use customer data for training public models, so:

- Customer data remains confidential

- Sensitive information cannot leak into public models

- The AI draws only from authorized, controlled sources

This controlled environment helps prevent cross-contamination that could lead to inappropriate or inaccurate responses.

6. Controlled AI behavior

Our implementation of GPT technology through OpenAI's private API (rather than ChatGPT's public interface) provides additional controls. The platform can set parameters, apply filters, and define instructions that guide the AI's behavior within appropriate boundaries for business communication.

Users can set filters at the section level to refine which source materials the AI Assistant will use when generating content, giving fine-grained control over the information the AI can access.
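Section-level filtering can be pictured as narrowing the pool of candidate source material before any text is generated. The sketch below assumes each passage carries a set of tags and each section may declare the tags it requires. The function, field names, and sample data are hypothetical and only illustrate the idea.

```python
def allowed_sources(
    passages: list[dict], section_filters: dict[str, set[str]], section: str
) -> list[dict]:
    """Keep only passages that carry every tag required for the section."""
    required = section_filters.get(section)
    if required is None:
        # No filter configured for this section: all passages are eligible.
        return list(passages)
    return [p for p in passages if required <= p["tags"]]


# Made-up passages and filters for illustration.
passages = [
    {"id": "P1", "tags": {"security", "emea"}},
    {"id": "P2", "tags": {"security"}},
    {"id": "P3", "tags": {"pricing"}},
]
section_filters = {"Security (EMEA)": {"security", "emea"}}

emea_ids = [p["id"] for p in allowed_sources(passages, section_filters, "Security (EMEA)")]
all_ids = [p["id"] for p in allowed_sources(passages, section_filters, "Overview")]
```

Applying the filter before retrieval, rather than after generation, means out-of-scope material never reaches the model for that section.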

Real-world impact

Customer success stories demonstrate the effectiveness of our approach. Companies using the platform report improvements in response quality and speed without compromising accuracy:

- Microsoft has saved over $17 million while equipping 18,000 sellers and experts with AI-powered content recommendations

- Invicti achieves an 83% win rate while responding 2.5 times faster

- Netsmart accelerates response time by 10 times, enabling the submission of 67% more proposals

These results suggest that our hallucination-prevention strategies work in practice, enabling organizations to leverage AI's speed and efficiency while maintaining the accuracy and reliability required for business-critical communications.

Exploring the broader context

Responsive’s AI approach aligns with industry best practices for preventing AI hallucinations:

- Retrieval-Augmented Generation (RAG): Grounding AI responses in verified external knowledge rather than relying solely on the model's training data

- Source attribution: Providing citations that allow for verification and build trust

- Human oversight: Maintaining human judgment as the final arbiter of quality

- Quality data management: Keeping the knowledge base accurate, current, and comprehensive

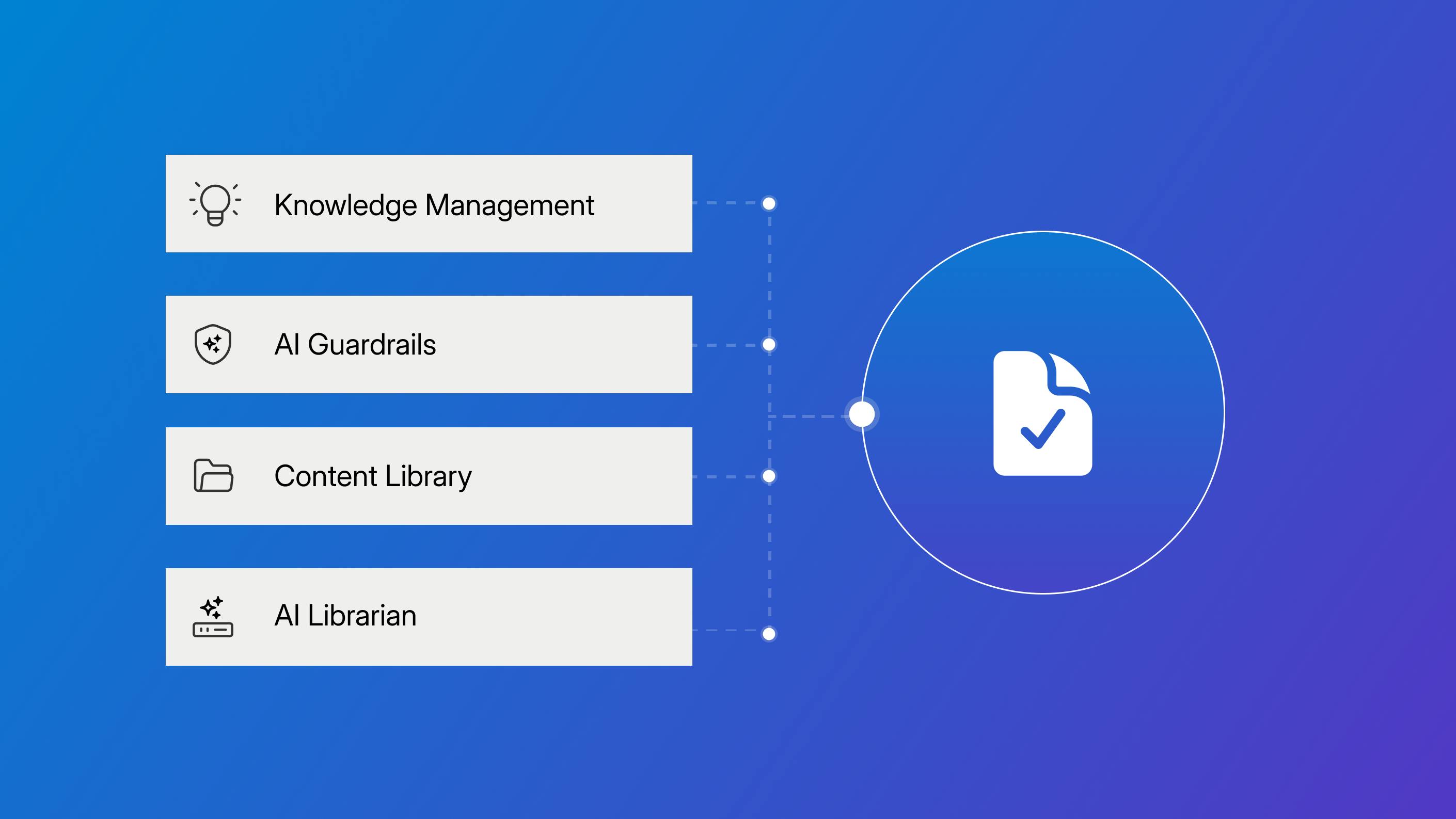

Responsive’s commitment to reliable AI

We've developed a comprehensive strategy to prevent AI hallucinations that combines technical safeguards, human oversight, and rigorous content management. By grounding our AI in verified knowledge bases, providing clear source citations, implementing human-in-the-loop workflows, and maintaining high-quality Content Libraries, the Responsive platform helps organizations harness the power of generative AI while minimizing the risk of inaccurate or misleading information.

As AI continues to transform how organizations handle strategic responses, our approach demonstrates that, with the right safeguards and design principles, you can achieve both the speed and efficiency of AI and the accuracy and reliability critical to business communications. The solution isn't to eliminate AI's limitations entirely, which may not be possible, but to design systems that work with human expertise to produce trustworthy results.

Andrew Martin

Content Marketing Manager @ Responsive

Andrew Martin covers AI adoption, RFP strategy, and proposal management at Responsive, drawing on insights from Responsive's 2,000+ enterprise customer base and original research.